Kirton McConkie shareholder Julie Crane was a featured speaker at the Organized Intelligence 2025 Conference: Latter-day Saint Perspectives on AI, held November 4–5, 2025, at the Church Office Building in Salt Lake City. Supported by the Future of Life Institute and partner organizations, the sold-out gathering brought together more than 600 scholars, technologists, faith leaders, policymakers, and creatives to explore the ethical, social, and religious dimensions of artificial intelligence.

Organized Intelligence is a new initiative led by Medlir Mema, Zachary Davis (Faith Matters), and Will Jones (Future of Life Institute). The two-day event convened distinguished voices in technology, academia, and faith communities—including Elder Gerrit W. Gong, Elder Kim B. Clark, Terryl Givens, and leading AI researchers and policymakers—to examine how religious traditions can contribute to responsible AI development and governance.

Julie Crane on the Evolving Legal Landscape of AI

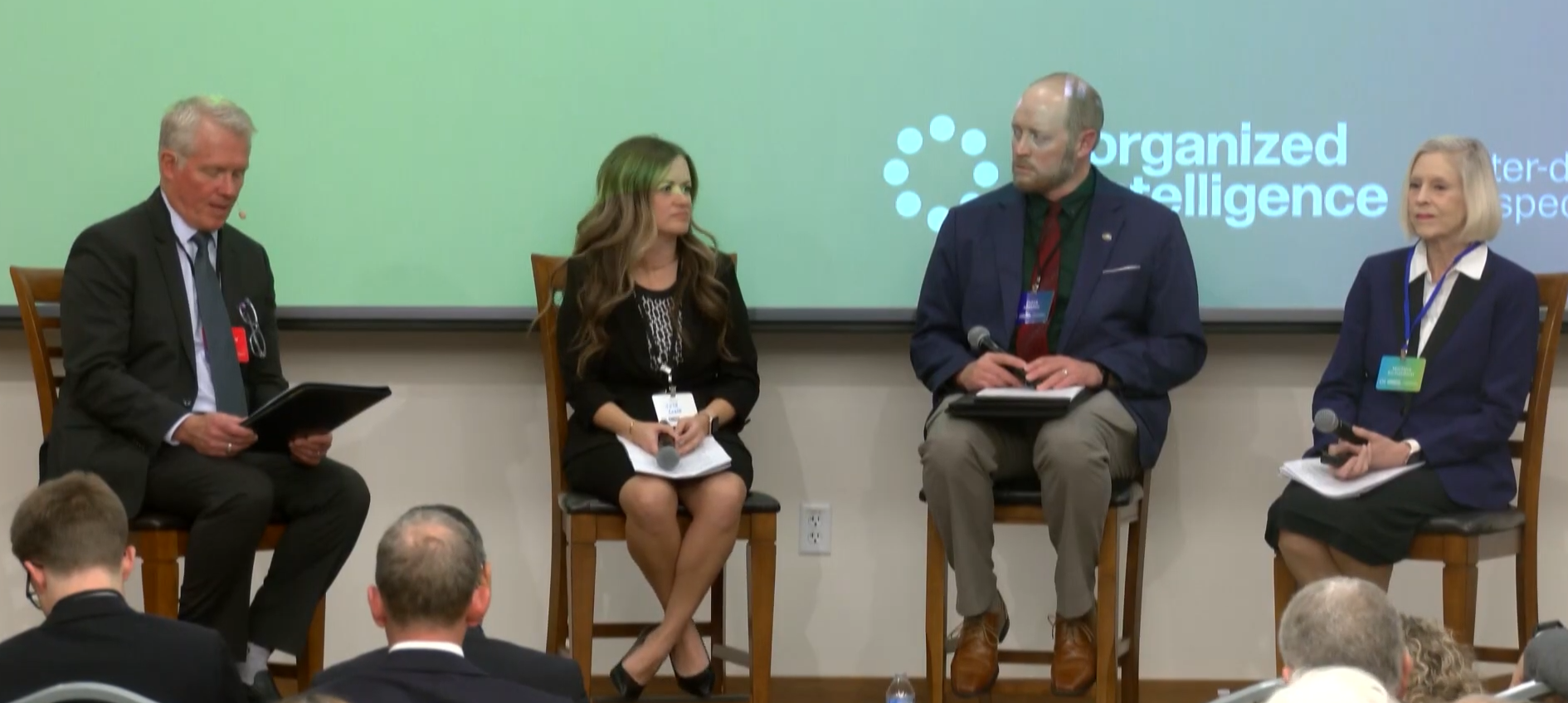

Crane delivered a well-received presentation on “The Evolving Legal Landscape of AI Governance,” offering a comprehensive overview of how rapidly advancing technology is driving significant regulatory activity in the United States and around the world. She also participated in the conference’s opening panel, “Faith, Ethics, and AI,” alongside experts in public policy, law, and interfaith collaboration.

In her remarks, Crane addressed common misconceptions about AI oversight and highlighted the acceleration of global AI regulation:

- AI is not being deregulated. Instead, laws are expanding at an unprecedented pace.

- Existing legal frameworks in consumer protection, privacy, intellectual property, and anti-discrimination already apply to AI.

- U.S. lawmakers have introduced over 1,000 AI-related bills in 2025, a dramatic increase from prior years.

- More than 69 countries have proposed over 1,000 AI governance measures.

Crane explained two major regulatory models emerging worldwide:

- Comprehensive frameworks, such as the EU AI Act, which regulate AI across use cases; and

- Targeted, sector-based laws, more common in the United States, including recent measures in Utah, Colorado, California, Tennessee, and Texas, as well as international approaches such as China’s deep-synthesis regulations.

Drawing parallels to the evolution of privacy law, Crane described how AI governance is moving rapidly toward enforceable standards, with substantial penalties for noncompliance. She emphasized that new laws aim to regulate the entire AI value chain, from developers to deployers and end users, in response to the growing consolidation of foundation-model providers.

Kirton McConkie’s Leadership in AI, Technology, and Ethics

Crane’s participation underscores Kirton McConkie’s growing role in advising clients on AI governance, emerging technologies, regulatory compliance, and ethics across sectors. The firm’s attorneys continue to contribute thought leadership at the intersection of law, innovation, and public policy, helping organizations navigate complex legal frameworks while aligning emerging technologies with human-centered and values-driven principles.